Neurable

In The News

Kelly Knez

neurable@berlinrosen.com

Featured News

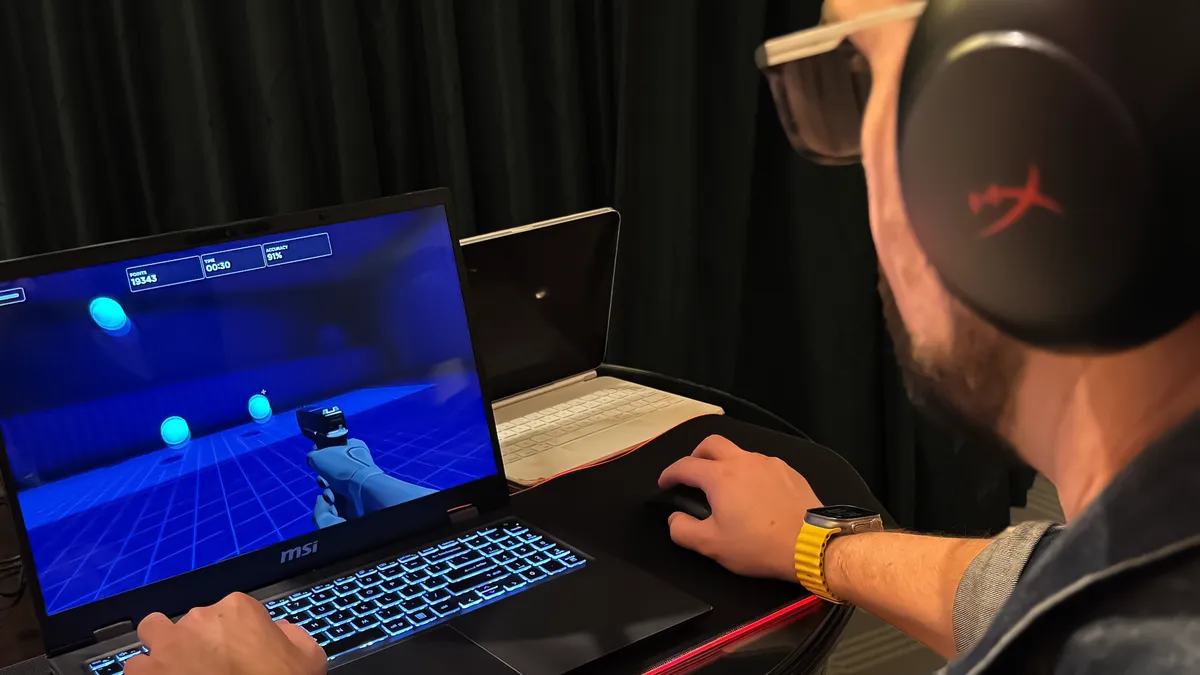

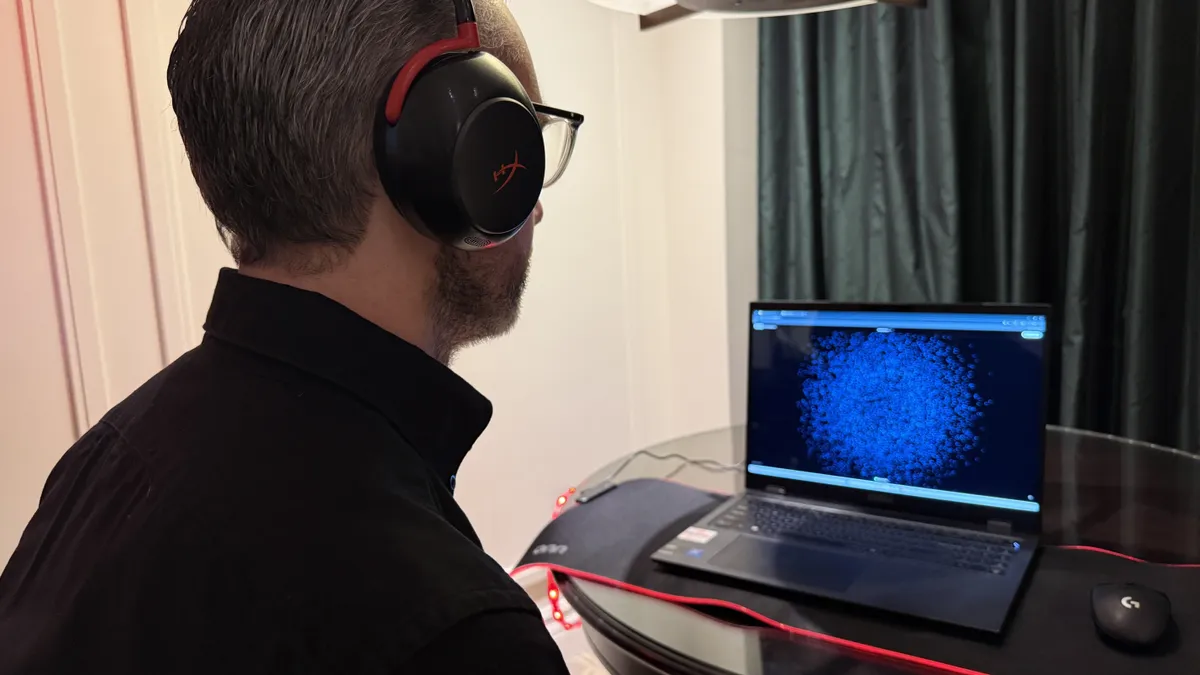

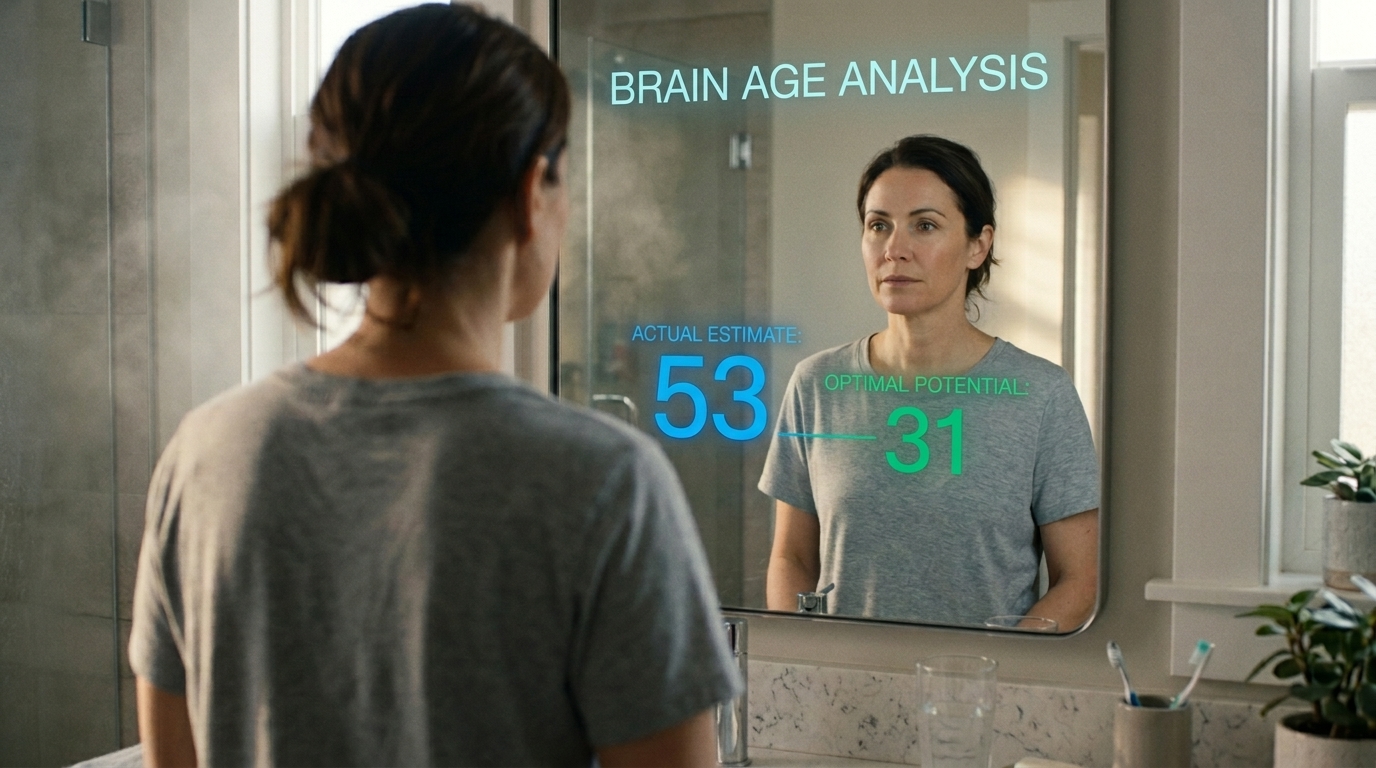

Innovation in BCI

January 20, 2026